It has been over a year since the US State Department said they would answer my FOIA request on fake opinion polls in Iraq

A spokesperson also refused to answer my questions about it

On February 3rd, 2021, I sent a Freedom of Information Act request to the US State Department for internal documents discussing potentially fabricated polling from Iraq. I sent them this request after corresponding with Michael Spagat, a professor at Royal Holloway, University of London, who has alleged that some of the polls conducted by contractors for the State Department between 2006 and 2010 contain fabricated data. The proof Spagat presents is, in my opinion (and I have to say that for legal reasons), undeniable. The story has been lightly covered elsewhere, including by Natalie Jackson, formerly of Huffington Post’s Pollster.com, and Andrew Gelman at his blog.

Spagat’s research, along with other stories of fabricated international polling data and examples of polling misuse at home, forms part of Chapter 4 of my forthcoming book STRENGTH IN NUMBERS. Now that the anniversary of my FOIA request has passed, I thought I’d share a bit of that story.

In the early 2010s, pollsters were just starting to learn about the extent of potential fabrication in their data. Some falsification took the form of “curbstoning,” in which interviewers make up small numbers of fake conversations while sitting on the curb of a street. But a broader and more concerning form of fabrication involves “near-duplicates” and “total duplicates” of survey data. This is where someone with access to one individual’s responses copies them for several or even dozens of additional “people” in the survey, modifying only some of the answers (or, in some dumb cases, none) to try to differentiate them.

Researchers at the time estimated that about 20% of international surveys contained a significant share of fabricated interviews, though there is some debate over the precision of that estimate. Notably, the Pew Research Center believes the share of potentially fabricated data is probably lower. (“Significant” here means a survey has duplicates or near-duplicates totaling at least 5% of its responses.)
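To make the duplicate idea concrete, here is a minimal Python sketch of how near-duplicates can be screened for. It is an illustration under stated assumptions, not the method used by the researchers above, Pew, or the State Department. For each respondent it computes a “maximum percent match” (the highest share of identical answers shared with any other respondent), counts respondents at or above a cutoff as near-duplicates (a total duplicate is a 100% match), and flags the survey if they make up at least 5% of responses, the threshold mentioned above. The 85% cutoff, the function names, and the toy data are all hypothetical, and the sketch assumes every respondent answered the same questions with no missing answers.

```python
from itertools import combinations

# ASSUMPTION: the 0.85 pairwise cutoff is chosen for illustration only.
NEAR_DUP_MATCH = 0.85
# The 5% survey-level threshold comes from the definition of "significant" above.
SURVEY_FLAG_SHARE = 0.05


def percent_match(a, b):
    """Share of questions on which two respondents gave identical answers.

    Assumes both answer lists have the same length and no missing values.
    """
    same = sum(1 for x, y in zip(a, b) if x == y)
    return same / len(a)


def max_percent_match(responses):
    """For each respondent, the highest percent match with any other respondent."""
    best = [0.0] * len(responses)
    # Compare every pair once; O(n^2), which is fine for a sketch.
    for i, j in combinations(range(len(responses)), 2):
        m = percent_match(responses[i], responses[j])
        best[i] = max(best[i], m)
        best[j] = max(best[j], m)
    return best


def flag_survey(responses):
    """Return (share of near-duplicate respondents, whether the survey is flagged)."""
    best = max_percent_match(responses)
    share = sum(1 for m in best if m >= NEAR_DUP_MATCH) / len(responses)
    return share, share >= SURVEY_FLAG_SHARE


# Hypothetical toy survey: 4 respondents, 10 questions each.
# The second row copies the first and changes only the last answer.
toy_survey = [
    [1, 2, 3, 4, 5, 1, 2, 3, 4, 5],
    [1, 2, 3, 4, 5, 1, 2, 3, 4, 4],
    [5, 4, 3, 2, 1, 5, 4, 3, 2, 1],
    [2, 2, 2, 2, 2, 2, 2, 2, 2, 2],
]

print(flag_survey(toy_survey))  # (0.5, True)
```

In the toy data, the copied respondent matches the original on 9 of 10 answers, so both count as near-duplicates and half the sample is flagged. Real surveys are messier: some questions legitimately produce identical answers across many people, which is one reason the precision of these estimates is debated.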

Some of the companies that collected data analyzed by these researchers also conducted surveys for major news outlets, and for the US State Department. Of course, 5% of 20% may sound like a small fraction, but we should not underestimate the potential impact of that fabrication. One example of how fake or faulty survey data can be used to influence decisions comes from the Washington Post’s Afghanistan Papers:

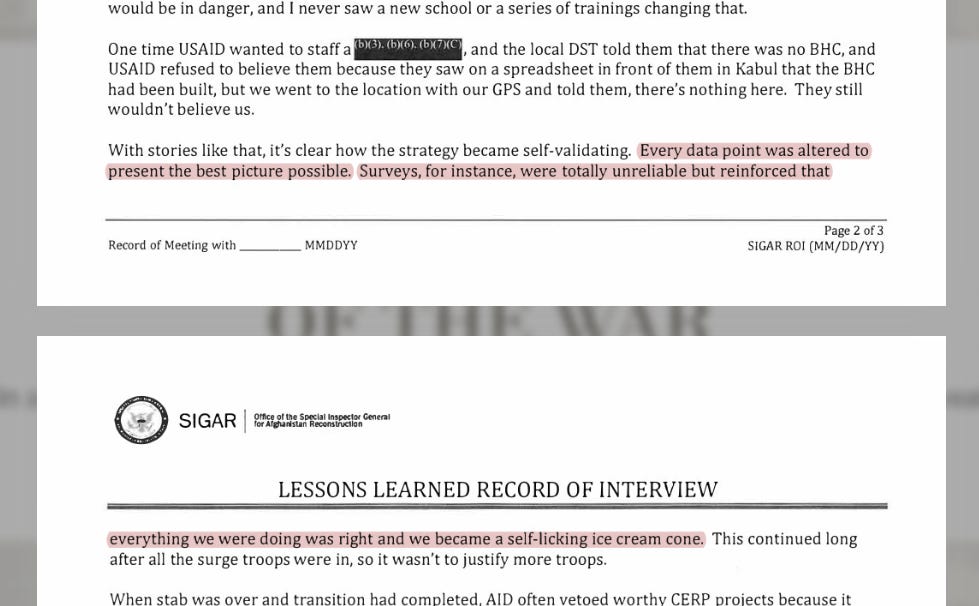

“Every data point was altered to present the best picture possible,” Bob Crowley, an Army colonel who served as a senior counterinsurgency adviser to U.S. military commanders in 2013 and 2014, told government interviewers. “Surveys, for instance, were totally unreliable but reinforced that everything we were doing was right and we became a self-licking ice cream cone.”

Yeah! That actually happened! The interview is recorded in government documents! Here’s a screenshot from the Post:

We think the problems introduced by data fabrication could be serious. If the US government in Washington, DC, was making decisions based on faulty data, we want to know about that. Interviews with several people working at the State Department suggest this is possible.

As part of our work to assess how fabricated State Department data could have been used to shape decision-making in the US government, we asked the department to provide (a) reports mentioning findings from the data and (b) reports on investigations of potential fabrication. We know the latter exist because the director of the Office of Opinion Research at the Bureau of Intelligence and Research (INR/OPN) gave a presentation about the office’s efforts to investigate and prevent fabrication at a conference of pollsters in 2015![1]

So, here is the text of the FOIA request we sent to State (for now, I have removed the names of the suspect survey firms, but you can find them with a quick Google search, in Chapter 4 of Strength in Numbers, or in the Spagat paper linked above):

Request: Reports written on public opinion polls conducted in Iraq for the Department of State between 2002 and 2016. Specifically, I am seeking information on two types of reports: (a) reports in which findings from these polls were disseminated to other officials; and (b) reports over whether the data collected by these firms was fraudulent or fabricated. The latter report would have been commissioned and completed in the decade beginning in 2010.

It has been a year and 33 days since I received an email from the State Department saying they accepted my request and would get back to me. During that time, the American Association for Public Opinion Research has released its own report on the methods and potential scope of fabrication, which you can read here. It does not name names.

Why the delay? It is possible that my request was too broad and required extra time to process. But a year seems unreasonable. Plus, Spagat has also sent in his own request, with a narrower date range and more specific language, and has not heard back either. So while a general delay in processing time is understandable, it sure seems like State simply does not want to help us uncover this. When I spoke with their press secretary in 2021, I made some progress toward interviewing the director of INR/OPN, but they got spooked when I asked for details and sent me only this statement:

The Department of State’s Bureau of Intelligence and Research has an enduring commitment to the truth. We therefore make every effort to ensure that our products are thoroughly and comprehensively vetted.

We have not concluded that any survey data from State Department sponsored research in Iraq was fabricated or fraudulent.

We contract with reputable, in-country firms who are experienced in social-marketing research, and our analysts often travel to their regions of responsibility to audit research facilities and contractor capabilities, and to observe the implementation of surveys and focus groups

Well, they can say that all they want. But the data (online here) undoubtedly contain fraudulent entries. You don’t even need to take my word for it: here is an audio recording of a State Department official named David Nolle admitting it! There “is a lot of data fabrication,” Nolle says, in “lots of overseas opinion polls conducted every year” that forced the Office of Opinion Research to institute a “major quality-control program” that included firing “19 interviewers, 2 supervisors and 2 key-punchers who were all involved” in a coordinated scheme to fabricate data for an external contractor.

This means the State Department’s statement doesn’t make much sense, does it? I guess they are free to say whatever they want about not “concluding” fabrication of their own accord. Maybe they have their own suspicions but no conclusions. But the strange thing about all the obfuscation is that the State Department knows we have this information. They released the fraudulent data in response to a FOIA request in 2010! We can see the patterns of fabrication! I guess there could be huge costs to their reputation if some of the data the US government was using to make decisions during a war turned out to be fake. But why not be an open, honest, and transparent participant in public discussions on this?

So, anyway, I would very much like to hear back on our FOIA. If anyone at INR/OPN is reading this…

You can read more about this saga in my book, which comes out on July 12th. Please pre-order it! I will write again with an update if I hear anything before then. We are considering legal action if we do not.


[1] Faranda, R. (2015). The cheater problem revisited: Lessons from six decades of State Department polling. Paper presented at the New Frontiers in Preventing, Detecting, and Remediating Fabrication in Survey Research conference, NEAAPOR, Cambridge, MA.
