Artificial intelligence and "big data" cannot replace public opinion polls | #213 - April 10, 2023

Plus, other links from vacation

Happy Monday, everyone.

I am back from two weeks of vacation in the Blue Ridge Mountains, where I collected a fair number of links to think and write about upon my return. So in this week’s newsletter, let’s talk briefly about AI and polling, ticket splitting, the Wisconsin Supreme Court election, and more.

1. Artificial intelligence cannot replace public opinion polling

Here is a fun story that came to me by way of Twitter. It goes like this: Robert Groves, a survey methodologist, sociologist, and the Director of the Census Bureau from 2009 to 2012, once told a story about how a team of government data scientists tried to use statistical modeling to come up with approximations of several economic indicators without relying on government surveys, which are massive, complicated and very expensive.

One day, the team came to him with a solution: given enough “big data” on the population and enough methodological tinkering, they could come up with accurate predictions of labor force participation and employment statistics without taking a direct survey. Groves responded as I imagine any cost-burdened government survey scientist would: "Great, we can stop running economic surveys."

But then, according to the story, the team replied: "No, you can't! We use those surveys to calibrate our models!"

Presumably, the data scientists’ models worked only because they were calibrated against the very employment statistics they were meant to replace. They could not get the models to work for the future, or for periods in the past when key variables were unavailable or when the relationships between indicators were different. The important lesson here is that many of the “algorithms” and “big data models” promised as solutions to the problems of old methods do not work in a vacuum; many, in fact, rely at least partially on those old methods to work accurately.

Someone sent me this story after I commented on this article from Atlantic writer Saahil Desai. Titled “Return of the People Machine” (a reference to the first computer program designed for measuring and manipulating voters’ attitudinal data), the piece dangles before us a future in which artificial intelligence replaces public opinion surveys: “No one responds to polls anymore,” the subhead reads. “Researchers are now just asking AI instead.”

The upshot of the article is that some scientists have gotten a popular AI model, GPT-3, to emulate humans and “respond” to survey questions as if it were a real person answering a poll. Quoting from the article:

When researchers at Brigham Young University fed OpenAI’s GPT-3 bot background information on thousands of real American voters last year, it was unnervingly good at responding to surveys just like real people would, for all their quirks, incoherence, and (many) contradictions. The fake people were polled on their presidential picks in 2012, 2016, and 2020—and they “gave us the right answer—almost always,” Ethan Busby, a political scientist at BYU and a co-author of the study, told me.

In their paper, the authors write that their “silicon sample” (as opposed to a human sample)…

…is nuanced, multifaceted, and reflects the complex interplay between ideas, attitudes, and sociocultural context that characterize human attitudes. We suggest that language models with sufficient algorithmic fidelity thus constitute a novel and powerful tool to advance understanding of humans and society across a variety of disciplines.

Desai, the Atlantic writer, puts the advantage of this AI polling “solution” this way: “A high-quality political poll can run $20,000 or more, but this particular AI-polling experiment cost the BYU researchers just $75. The People Machine, it seems, has whirred back to life.”

That is, indeed, a huge increase in researchers’ return on investment! Or it would be if it were real. The reality is not so rosy.

See, the authors of this paper did not just conjure up these profiles of voters from thin air. They fed the AI “People Machine” demographic and attitudinal data that itself came from a poll! And not a cheap poll, either; the surveys in question are the American National Election Studies polls from 2012, 2016, and 2020. Each one of these polls receives millions of dollars of funding from the federal government and outside sources. The 2024 survey, for instance, is expected to win a National Science Foundation grant of $14m! That is quite a lot higher than the $75 quoted by Desai.

In truth, the cost of the “AI polling model” is even higher, since GPT-3 first had to be trained on hundreds of billions of words of text, using massive arrays of computer servers, to give it the “intelligence” necessary to generate the synthetic samples of voters the researchers asked for.

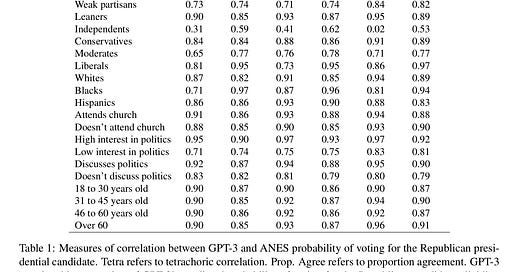

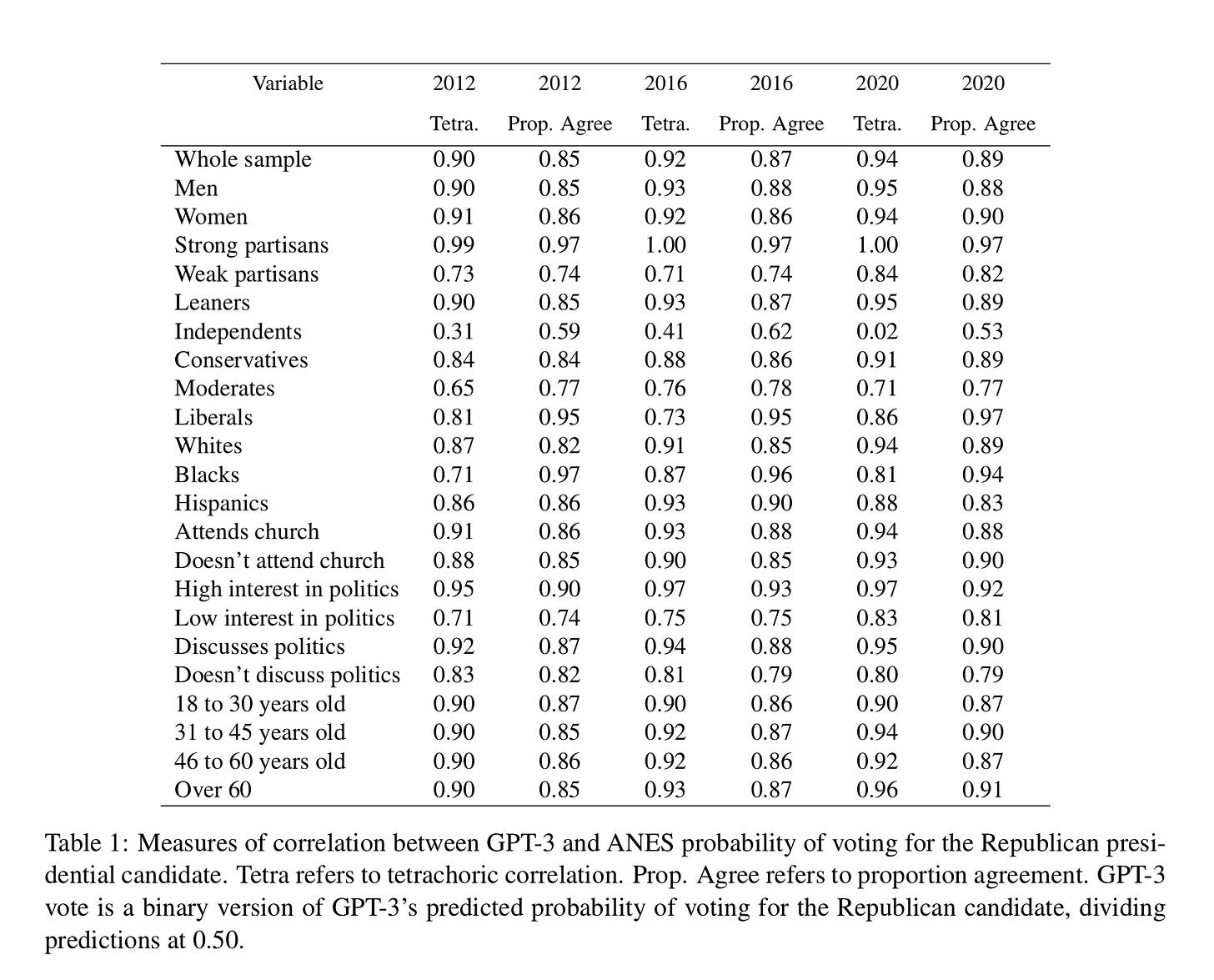

And even then, it did not do a particularly good job. The results of the GPT-3 “silicon poll” agree with the actual ANES values in 2012 only 85% of the time. And the correlations for key groups (Black voters, weak partisans, independents, and low-engagement voters in particular) range from decent to bad to downright atrocious (e.g., a 0.31 correlation for political independents in 2012).

This means that the “silicon samples” are not particularly helpful for people trying to learn about the public. As Ariel Edwards-Levy, CNN’s editor of polling and election analytics, put it:

The biggest polling miss in 40 years was a 3.9 pt overstatement of Biden's support, whereas the technology of "alarming accuracy" that's going to replace it "matched the preferences of real voters at least 85 percent of the time"
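The two numbers in that comparison measure different things, and spelling out the arithmetic shows the match rate is even less reassuring than it sounds. An 85% individual-level match rate leaves 15% of simulated respondents misassigned, and misassignments in opposite directions cancel out in a topline. A quick sketch, assuming a hypothetical sample of 1,000:

```python
def topline_error_points(n, wrongly_to_candidate, wrongly_from_candidate):
    """Net error in a candidate's vote share, in percentage points.
    Individual-level mismatches in opposite directions cancel in the topline."""
    return 100 * abs(wrongly_to_candidate - wrongly_from_candidate) / n

n = 1_000          # hypothetical sample size
mismatches = 150   # what 85% individual-level agreement leaves over

best_case = topline_error_points(n, mismatches // 2, mismatches // 2)
worst_case = topline_error_points(n, mismatches, 0)
print(f"85% agreement allows a topline error of {best_case:.1f} to {worst_case:.1f} points")
```

So an 85% match rate is consistent with anything from a perfect topline to a 15-point miss, nearly four times the 3.9-point error in the quote, and the match rate alone cannot tell you which.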

In sum, we have established that AI alternatives to public opinion polls (1) themselves rely on polls, (2) cost a lot more money in research, development, and deployment than a single traditional contemporaneous poll does, and (3) do a poor job of representing both Americans on average and some of the highest-value, lowest-responding groups in the public.

There is an even more insidious angle to this last point: many poorly calibrated predictive models of voter behavior do a reasonable job of assessing the hyper-partisan, hyper-sorted members of the public but fall completely flat when making predictions for people with less polarized attitudes. An AI-generated profile of a suburban moderate’s views is probably going to look a lot more extreme than this group is in reality.
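Here is a deliberately crude sketch of that failure mode, with made-up attitude scores on a -1 (most liberal) to +1 (most conservative) scale. The hypothetical "model" predicts each person's attitude from party alone, using the average of the strong partisans it effectively learned from; weak partisans stand in for less polarized voters such as suburban moderates. This is not how GPT-3 works internally. It only shows why leaning on a sorted-partisan signal produces much bigger errors for people in the middle.

```python
import statistics

# Made-up attitudes on a -1 (most liberal) to +1 (most conservative) scale.
respondents = {
    ("Democrat", "strong"): [-0.9, -0.8, -0.85, -0.75],
    ("Democrat", "weak"): [-0.4, -0.2, -0.3, -0.1],
    ("Republican", "strong"): [0.9, 0.8, 0.85, 0.75],
    ("Republican", "weak"): [0.3, 0.1, 0.4, 0.2],
}

# Hypothetical crude model: predict everyone's attitude from party alone,
# using the average of that party's strong partisans.
party_prediction = {
    party: statistics.fmean(respondents[(party, "strong")])
    for party in ("Democrat", "Republican")
}

def mean_abs_error(party, strength):
    """Average distance between the party-only prediction and real attitudes."""
    prediction = party_prediction[party]
    return statistics.fmean(abs(prediction - x) for x in respondents[(party, strength)])

for party, strength in respondents:
    print(f"{strength:>6} {party:<10} error: {mean_abs_error(party, strength):.3f}")
```

Strong partisans are missed by 0.05 on average and weak partisans by 0.575, and every miss for the weak partisans pushes them toward their party's extreme.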

If people turn to AI “polls” as technical solutions for putting their finger on the public’s pulse, that could end up being a costly mistake. If we are smart — and if we are lucky — we will not let AI “replace” polls any time soon.

2. “Why this extremely viral poll result might not be real”

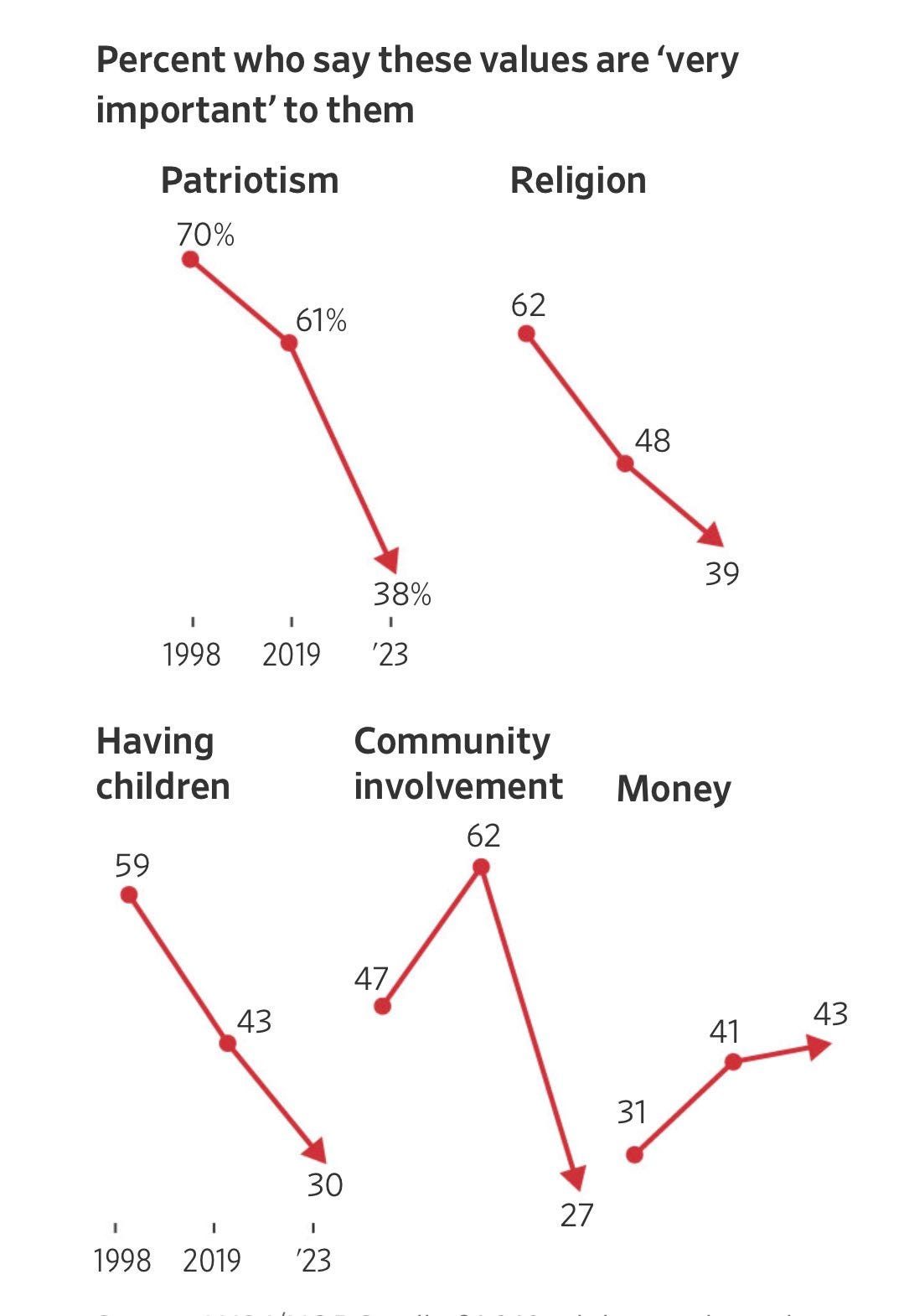

Patrick Ruffini, a Republican pollster at the firm Echelon Insights, wrote last week about jaw-dropping new numbers from a Wall Street Journal poll showing that Americans’ attachment to values such as patriotism and community involvement had plummeted since 2019. In 2019, 61 percent of respondents to the Journal’s telephone polling with NBC News said patriotism was “very important” to them. Today, in the Journal’s online polling with the National Opinion Research Center (NORC), that number is 38 percent.

Here’s the WSJ graphic:

That sure looks like American society is disintegrating, right? Well, as it turns out, the huge four-year decreases in these values may be mostly due to a switch in how pollsters are reaching respondents, rather than a change in what Americans believe. According to Ruffini:

Surveying the exact same types of respondents online and over the phone will yield different results. And it matters most for exactly the kinds of values questions that the Journal asked in its survey.

The basic idea is this: if I’m speaking to another human being over the phone, I am much more likely to answer in ways that make me look like an upstanding citizen, one who is patriotic and values community involvement. My answers to the same questions online will probably be more honest, since the format is impersonal and anonymous. So the 2023 survey probably does a better job of revealing the true state of patriotism, religiosity, community involvement, and so forth. The problem is that the data from previous waves were inflated by social desirability bias and can’t be trended with the current data to generate a neat-and-tidy viral chart like this.

That’s not to say that the new WSJ numbers are bad or biased (though by definition one of these sources is “wrong,” and I suspect the online numbers are not representative of offline Americans), only that they are not comparable over time. These issues will likely crop up more and more often as telephone polls become cost-prohibitive and firms experiment with new methods, so they are worth keeping in mind the next time you see a stunning graph or set of statistics.
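The trend problem boils down to simple arithmetic. If the phone estimate equals the true value plus a social desirability bonus, and the online estimate is taken as unbiased (itself an assumption), then the true change is the observed change plus that bonus. The 61 and 38 percent figures come from the Journal's polls; the size of the phone bonus is unknown, so this sketch tries a few hypothetical values.

```python
def implied_true_change(phone_then, online_now, phone_bonus):
    """True change in the share saying patriotism is 'very important', in points.

    Illustrative assumptions: phone estimate = true value + phone_bonus
    (social desirability), and online estimate = true value.
    """
    return online_now - (phone_then - phone_bonus)

PHONE_2019 = 61   # WSJ/NBC telephone poll, percent "very important"
ONLINE_2023 = 38  # WSJ/NORC online poll, percent "very important"

for bonus in (0, 10, 20):  # hypothetical sizes of the phone-mode bonus, in points
    change = implied_true_change(PHONE_2019, ONLINE_2023, bonus)
    print(f"phone bonus of {bonus:>2} pts -> implied true change of {change:+d} pts")
```

Depending on an assumption the data cannot pin down, the same two numbers imply anything from a 23-point collapse to a 3-point dip, which is exactly why they should not be trended.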

3. A real “great awokening” — or social desirability bias?

A new paper published in the American Journal of Political Science seeks to answer this question. The study, by Drew Engelhardt of the University of North Carolina at Greensboro, tests survey data with several complex statistical models (this paper is strictly For The Nerds) to see whether attitudinal change is genuine or the result of underlying psychological processes pressuring respondents to answer questions in certain ways.

Engelhardt finds that the increasing racial liberalism of (White) Democrats over the last decade is largely genuine, though other explanations may contribute some of the shift. He writes:

Using measurement models to evaluate responses to the racial resentment measure, I find evidence that genuine change appears best able to explain observed patterns. Evidence does manifest for the expressive and measurement positions, suggesting they may contribute some. Part of the difference in observed racial resentment between White Democrats and Republicans may come from varied measure interpretations. But support is inconsistent across tests and cannot fully explain observed shifts. Attitude change appears largely genuine.

4. More reading

Here is a blitz of links that are also interesting:

Thomas Edsall (NYT): The partisan divide in America comes down partially to our individual degrees of “tightness” and “looseness”

Tufts Public Opinion Lab: In a cool survey experiment, a Trump endorsement decreases a hypothetical Republican Congressional candidate’s favorability rating by 7 points

A good new “Bluegrass Beat” newsletter from Perry Bacon Jr. on the asymmetric number of Republican pro-choice swing voters in Kentucky

A map of the 2020-to-2023 shift in Democratic vote share in the Wisconsin suburbs, and how education polarization is helping Democrats there. The entire 2024 election could be decided in these precincts of Milwaukee, Waukesha, and Dane counties

New estimates of ticket-splitting in the Trump era

…

That’s it for this week. Talk to you all next Sunday,

Elliott


Politics by the Numbers is a reader-supported publication. It takes a lot of time to write the free weekly edition for loyal readers. Those who want to support the blog or receive more frequent posts can sign up for a paid subscription here.

Feedback

Thanks very much for reading. If you have any feedback, you can reach me at gelliottmorris@substack.com, or just respond directly to this email if you’re reading it in your inbox.